Table stakes, KTLOs and Differentiators

How to be competitive, how to disrupt and how to see you're getting disrupted

I've spent quite some time now thinking about product strategy - both from an individual product's perspective and from a product portfolio level. One topic I have pondered a lot is the types of work required to make a product successful. I developed a whole set of terms and quasi-theory about it, and as usual, apparently others have already thought of (most of) it.

So much so, that the terms that I came up with were the exact terms used by others already! So what I'll do here is explain the terms and link to previous writing on it, and then expand on it a bit (hopefully others haven't thought of that first too!).

So before I go on, I'd like to give huge credit and a recommendation to check out Amit Ranadive's article on this topic. It really freaked me out that I had independently thought of all this and then found out how much of it had already been covered in Amit's article from 2016.

Despite that, I decided to write my own article on it, because I do have some thoughts which are not covered in Amit's post, and I wanted to explain things from my perspective and in my own way.

Table stakes

These are capabilities or features that are basic expectations in order to be taken seriously in the market. If you don't offer these capabilities, then there is little chance of adoption and existing users might quickly churn. These things are not unique to your product, but if your product doesn't offer them, then nobody will use it. They are highly valued by customers, but if you talk to them, they might not even mention these features - yet they would definitely notice (and complain) if you don't have them (or if you remove them).

This is the kind of work without which we won’t even be considered for the job, or would be quickly ‘fired’ (partners leave). For some products, like an SDK, it could mean having a stable codebase (i.e., one that doesn't crash) which doesn’t make things slow (low memory footprint). For APIs it might be a certain standard of uptime, the ability to do basic CRUD operations, etc. For other kinds of products, pricing (e.g., having a free tier) might be table stakes given the competition. For others still, it might be legal, regulatory or other standards compliance, such as GDPR, HIPAA or WCAG. These don't set you apart, but if you don't have them, then depending on the product and the market, you might not even be considered by customers in the first place.

KTLO (Keep the lights on)

KTLO (Keep the lights on) work is the work needed just to keep the product running. Without it, the product will literally stop working (or will not work as well as we want). The interesting thing is that many (if not most) of these efforts will either be invisible to the end customer, or go unnoticed (if you're doing a good job) - but they are necessary for the product teams to develop and maintain the product. If you drop the ball on these types of efforts, the customer will eventually notice. It might even call the integrity of the product into question.

For example, your app might be scaling quickly, and at some point your engineering team decides that major rewrites, a new architecture or new infrastructure are needed, because otherwise there is a risk of major crashes or scaling issues down the line. These changes typically happen under the hood and end consumers won't know about them unless explicitly told. If your product is in the fintech space, then perhaps fraud prevention would be something you need to spend a lot of effort on just to keep the lights on.

Differentiators

These are capabilities or features that are the reason why your customers choose you specifically. These are competitive advantages. If you don't work on offering at least a few of these, then there is little chance of customers choosing your product even if you're doing all the basics (AKA table stakes) right.

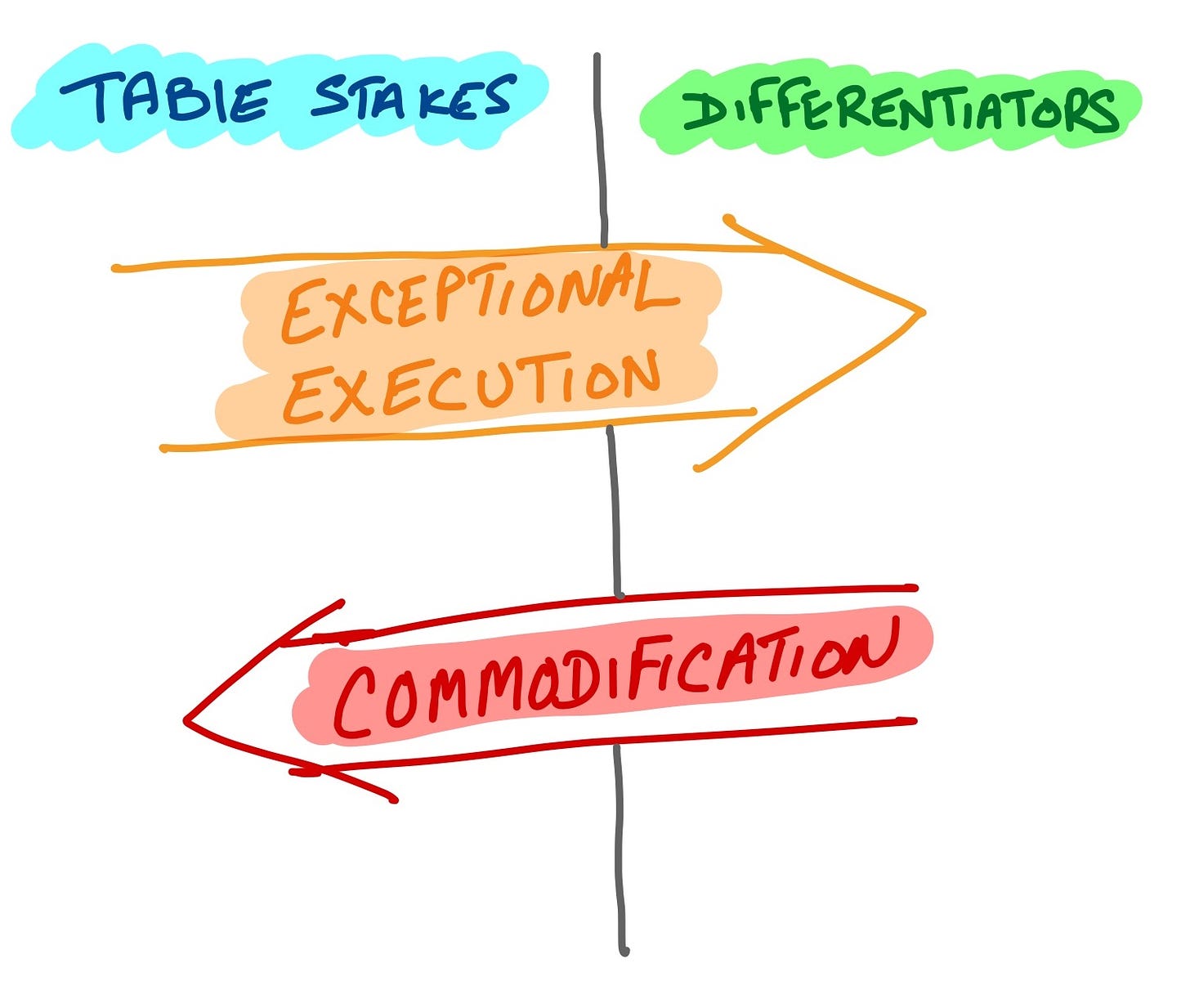

Now, the interesting part. The categories you have made and filled out are not set in stone. Depending on market conditions, audience perception, regulatory environments, and your own execution of said capabilities, features can move between them. They can jump from differentiators to table stakes or even vice versa. This can result in some interesting ways to look at your (or your competitors') products.

How to increase your chances of success

So now, you have a list of features or capabilities for your product divided up into Table Stakes and Differentiators.

Keep in mind that differentiators are competitive advantages - the reasons why customers choose you specifically. Table stakes are just the minimum required to be on the playing field and be considered in the first place.

So what you want to do is take as many of your table stakes capabilities as you can and make them differentiators as well. How do you do it? Great execution. Let's see a few examples of products which have done exactly that.

Let's take GMail as an example. Every email service offers users storage space for their emails, but GMail executed on it so well that it became a differentiator. At that time, email services (especially free ones) were providing storage space in the range of a few MBs, whereas GMail promised 1 whole GB!

Another thing was spam detection. They were not the only email service doing it, and even at that time it was kind of expected to some degree from email service providers, but GMail's spam detection took it to another level and that also became a differentiator.

This is also how you can approach disrupting an incumbent - i.e., it's not always necessary for a differentiator to be an absolutely original offering not found anywhere else. You can implement a thing which is found everywhere else too, but execute on it so well that you're known for it and people choose you - thus taking a table stakes functionality and turning it into a differentiator.

How to notice that you are being disrupted

Clayton Christensen (one of my heroes) has written extensively on disruption theory and I have used that quite a bit to analyse things in the past. However, you could also view it through the lens of table stakes and differentiators.

If more and more of your differentiators are moving over to the table stakes column, then that's a warning sign that you're about to be disrupted through commodification. Think about it - if more and more of your differentiators are becoming industry-standard table stakes, then your solution is increasingly becoming a commodity and there are fewer and fewer reasons for customers to choose you specifically.

You can make table stakes features into differentiators by elevating your execution relative to the competition. On the other hand, if enough competitors copy your differentiating offerings and reach similar levels of execution, then those offerings become commodities that are no longer unique to you, and thereby become table stakes for the industry.

Let's take the example of browser rendering engines (this is basically the set of components which turn website code into what you see and interact with in browsers). Back in the day, almost all major browsers had their own rendering engine. Internet Explorer's was called 'Trident', Safari was based on 'WebKit', Opera was based on 'Presto' and Mozilla's was based on 'Gecko'.

The rendering engine was seen as a differentiator, but with time, some browsers realised that the rendering engine was just a commodity for them, and not something that people were choosing their browser specifically for. Instead, the actual differentiation lay more in other features (overall UI, tab handling, privacy and content blocking, etc). For smaller players it was increasingly costly to maintain and grow the engine when they could use their resources on the differentiators which actually mattered to them. With time, Opera ditched their own rendering engine and adopted WebKit (and later switched to Blink/Chromium).

The Microsoft Edge browser (the successor to Internet Explorer) ditched its own rendering engine (EdgeHTML, a fork of Trident) and is now also based on Chromium, and so are newer browsers like Vivaldi, Brave etc. The browsers based on Chromium now try to differentiate mostly on user-facing aspects (tab handling, ad blocking, general UI enhancements, etc).

For your own products, it might be good to think about:

Which of your product features are table stakes and which are differentiators?

Which are efforts that should be considered KTLOs?

Can some of the table-stakes features be turned into differentiators? What would it take to do so? How can you validate that with customers?

Have some of your differentiators become table stakes over time in the industry you operate in? Do you see more of that happening? What could you do to prevent that?

Thinking about these questions might be a worthy exercise to do from time to time.